RealityKit

Compiling .reality: The Technical Reality

Xcode runs a verified two-pass process to compile .reality files: first generating USD schemas from your Swift components, then compiling them into optimized bundles.

WWDC

TL;DR
* Spatial computing is in a transitional phase: the technology is robust, but the market and use cases are still catching up.
* Apple’s approach is methodical, not flashy: rather than chasing hype, Apple is building the infrastructure (standards, APIs, specifications, developer tools) that will power the next wave

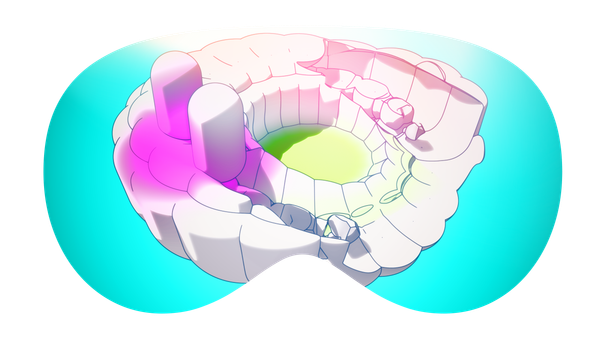

ARKit

TL;DR, If you want virtual environment light gradually replacing your real-world shaded objects, use Virtual Environment Probe. Achieving visual realism for virtual elements involves many parts, but lighting stands out as particularly challenging, especially in real-time rendering for platforms like visionOS and RealityKit. Material surface behavior in response to

TabletopKit

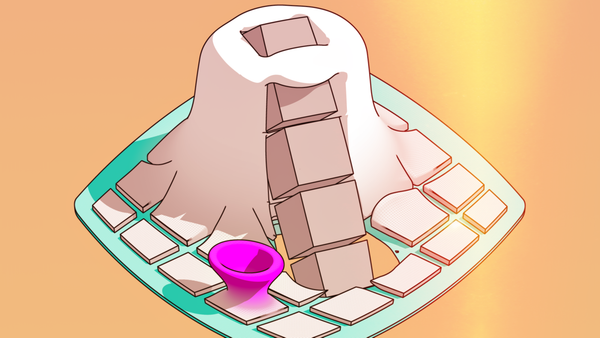

TL;DR, If you are interested in building a spatial game (or just about any immersive experience), you'll probably want to implement an architecture like TabletopKit. Create multiplayer spatial games on a virtual table surface and use FaceTime to invite players. TabletopKit first impressions We are creating a

RealityKit

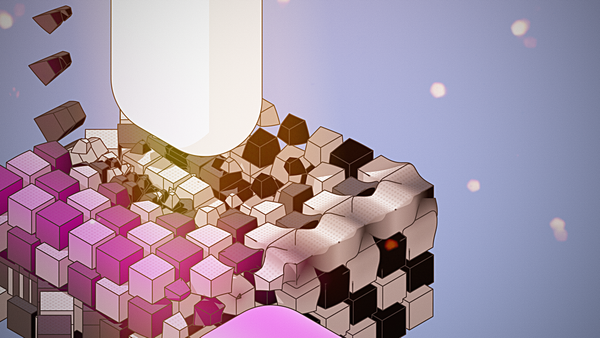

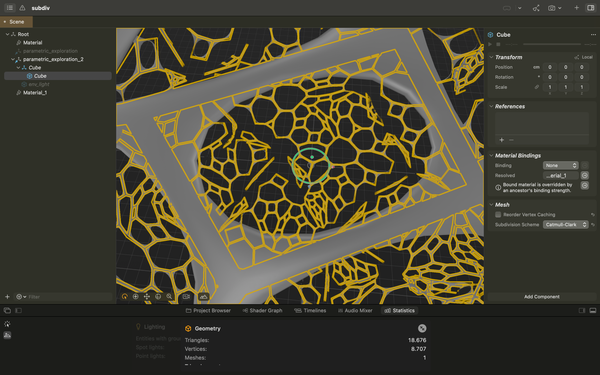

TL;DR: SubD can currently only be activated in Reality Composer Pro, and only for geometry using physically based (not custom) materials. While working with 3D, especially for real-time rendering engines like RealityKit (the current engine powering visionOS), balancing geometry, material types, sizes, and render passes is critical for creating an

RCP

Reality Composer from macOS (not Pro) was quietly sunset not too long ago. Aside from being a graphical editor that allowed for basic scene setup, it also had other great utilities, such as cross-platform preview and some popular features that allowed for creating interaction with triggers and events. Reality Composer

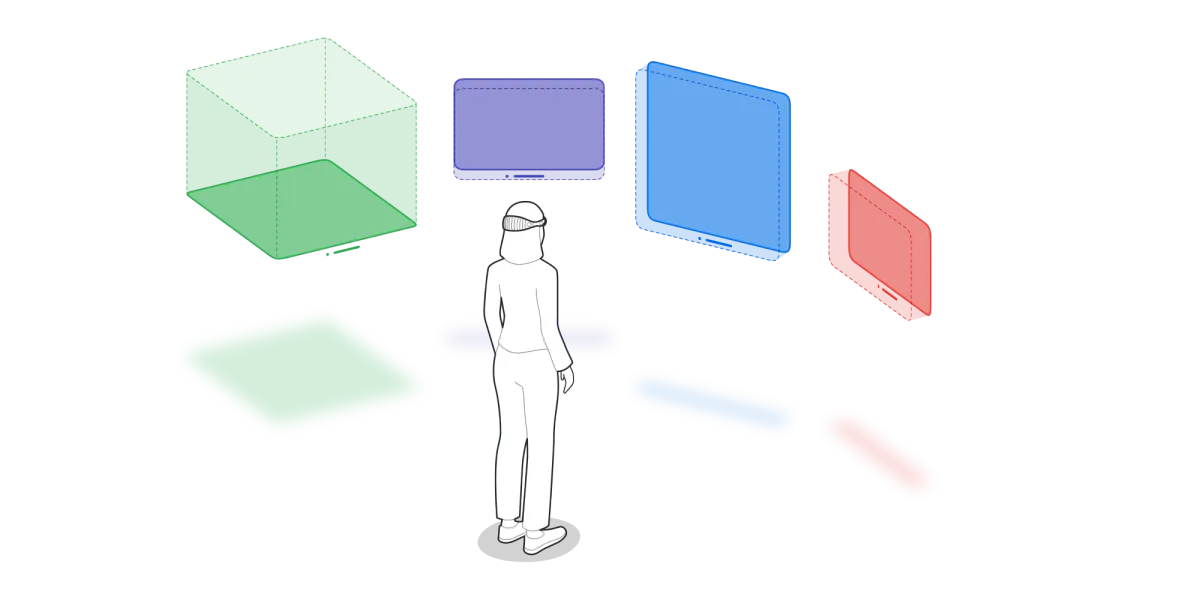

Spatial Computing

Linda Dong and Mike Stern of Apple's Design Evangelism team gave a talk at Config 2024. The presentation's premise was already intriguing: how spatial computing unlocks new ways to experience and interact with user interfaces. The presentation video took some days after the event to be

visionOS

In visionOS, there's something about docking videos in immersive environments (anchoring them like a screen in a movie theater) that makes them feel much more present and impressive. Even though this has been limited to a select few apps and the system has control over the location,

WWDC

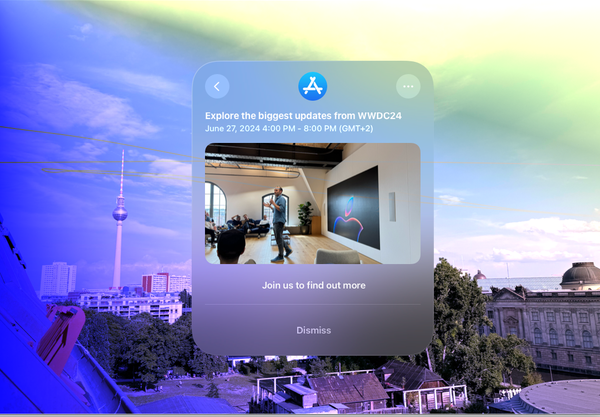

TL;DR: A minimalistic, direct, and carefully arranged Apple get-together with great attendees. Some days ago, I attended an Apple event in Berlin, which left me with a great feeling. The in-person session took place in a lovely area near the museum island, which I often visit but apparently

visionOS

Tom mentioned it, so here's a little test of the sample code for the new visionOS 2.0 room tracking capability. The project demonstrates how to construct an ARKit session with a RoomTrackingProvider, which lets you check whether any point in space is contained in the current room.

WWDC

Introduction In a wearable, highly sensitive information access platform where consumers and customers frequently question what the actual use cases are, how do you maintain a balance between protecting privacy and enabling all of the potential use cases that developers come up with? That is exactly what WWDC24 is about.

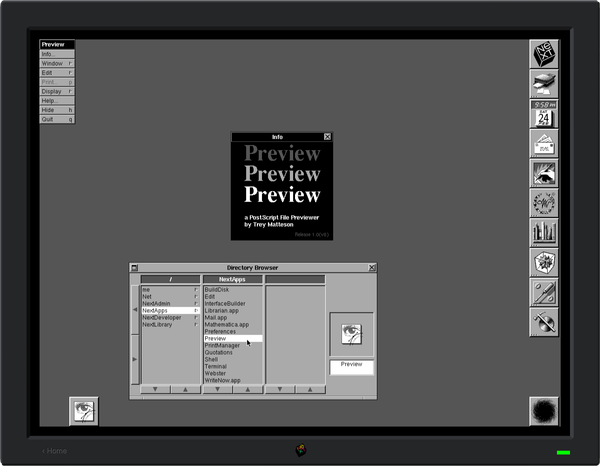

macOS

When you open .usd in Preview, you can do some cool things with it

SwiftUI

We have this feature in a visionOS app that allows users to select videos from their library using the PhotosPicker from PhotoKit. Overall, its implementation is simple and effective, especially given everything that is going on behind the scenes. Once a video is selected, we wanted to avoid restricting the

SwiftUI

One common issue encountered when developing for visionOS is that all measurements are displayed in imperial units by default. This is because there are still no settings for Language & Region. To allow users to select the measurement unit type without having to set the locale, formatters must be used
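
As a rough illustration of the formatter approach described above, the sketch below converts a length to a user-chosen unit and formats it with `MeasurementFormatter`, using `unitOptions = .providedUnit` so the formatter keeps the supplied unit instead of substituting the locale's preferred one. The `LengthPreference` enum and the `formattedLength` helper are hypothetical names introduced here for illustration, not part of the original post or of any Apple API.

```swift
import Foundation

// Hypothetical in-app preference (an assumption for this sketch):
// the user picks a unit system independently of the device locale.
enum LengthPreference {
    case metric, imperial

    // Unit used for display under each preference.
    var unit: UnitLength {
        self == .metric ? .meters : .feet
    }
}

// Formats a length given in meters using the user's preferred unit.
func formattedLength(_ meters: Double, preference: LengthPreference) -> String {
    // Convert from meters to the unit the user selected.
    let measurement = Measurement(value: meters, unit: UnitLength.meters)
        .converted(to: preference.unit)

    let formatter = MeasurementFormatter()
    // Keep the unit we provide rather than the locale's default unit.
    formatter.unitOptions = .providedUnit
    formatter.unitStyle = .medium
    formatter.numberFormatter.maximumFractionDigits = 2
    return formatter.string(from: measurement)
}

let metricText = formattedLength(1.5, preference: .metric)
let imperialText = formattedLength(1.5, preference: .imperial)
print(metricText)
print(imperialText)
```

The preference would typically be stored in app settings and passed wherever a measurement is rendered. Number formatting (decimal separator, unit symbol) still follows the current locale; only the choice of unit is taken from the user's selection.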

Accessibility

I have been rethinking the challenges that some teams face when incorporating accessibility into existing pipelines. It seems that we (people involved in making products) will require a development methodology that is accessibility-driven and involves the execution of automated accessibility audits during testing. Apple made some hints at the Perform

visionOS

Observing the ScenePhase in the environment has proven to be a far more reliable technique for determining whether an ImmersiveSpace is active. This is particularly helpful for making accessibility announcements, as it allows the user to adjust to the context switch. import SwiftUI @main struct ImmersiveScenePhaseApp: App { @Environment(